To scale high throughput, flume has the notion of channels selectors with the option to multiplex across multiple channels which allows for horizontal scalability. In order to achieve scale and resiliency, the Flume source hands messages off to a channel. Once compiled, the source jar is added to the plugins.d directory, a convenient way to organize custom code. For example, including MBean counters allows the source metrics to be displayed on existing dashboards. The source specific configurations followed the existing fume configuration patterns nicely. Much of the example code was modeled off of the Netcat implementation. The continuous stream of data aligned better with the EventDriven implementation.

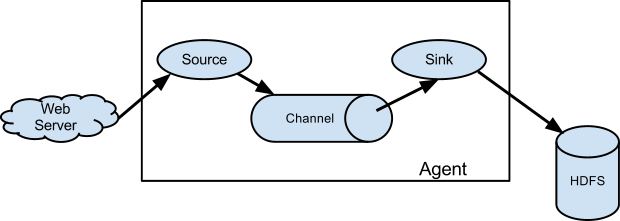

There are two implementation patterns to follow, Pollable or EventDriven. There are a lot of pre-implemented sources but none that could natively stream binary data produced at a URL endpoint, so a custom source implementation was required. The interface with the raw data is called a source. It is customizable enough to allow for inclusion in this evaluation. The first tool evaluated is a popular log-based data ingest platform, Flume. Streaming audio has a mild bitrate of 128Kb/s, but if one wanted to, for instance, record all radio stations within a listening zone, the aggregate would be substantial.Īll code for this post can be found on Github. It may not be feasible to serialize the data at that frequency and instead the focus on ingest will be pure capture and then push the serialization processing to a distributed system like Hadoop.įor this post, the ingests tools were put through their paces using audio ingest as an example use case. Consider, for example, a temperature sensor, with a resolution of 1000 readings per second. Obviously, a binary stream can also be broken down into discrete data points but instead of being event-based, the data is a continuous stream collected at a specific frequency.

The data can be serialized into discrete chunks based on the event. Most data, like usage logs, for example, are streams of text events that are a result of some action, like a user click. Future posts will continue to examine processing techniques as data makes its way through the Hadoop data pipeline including a look at Spark Streaming. The focus of this post will be on how three popular Hadoop ingest tools – Flume, Kafka, and Amazon’s Kineses – compare with respect to initial capture of this data, particularly on configuration, monitoring and scale. As discussed, big data will remove previous data storage constraints and allow streaming of raw sensor data at granularities dictated by the sensors themselves. The Internet of Things will put new demands on Hadoop ingest methods, specifically in its ability to capture raw sensor data - binary streams.
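The hand-off of a continuous binary stream from a source to a channel, as described in this post, can be sketched in plain Java without the Flume API. This is a minimal illustration and not the code from the post's repository: the class `StreamChunker`, the method `pump`, the 4096-byte chunk size, and the use of a `BlockingQueue` as a stand-in for a channel are all assumptions made for the example. It reads whatever bytes are available, up to the chunk size, and queues each chunk as one message, doing pure capture with no serialization.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.util.Arrays;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

// Hypothetical stand-in for an event-driven source: not Flume's API.
public class StreamChunker {

    /**
     * Reads the stream until end-of-stream and hands each chunk
     * (at most chunkSize bytes) to the queue, which plays the role
     * of a channel here. Returns the number of chunks handed off.
     */
    static int pump(InputStream in, BlockingQueue<byte[]> channel, int chunkSize) {
        byte[] buffer = new byte[chunkSize];
        int chunks = 0;
        try {
            int read;
            while ((read = in.read(buffer)) != -1) {
                if (read == 0) {
                    continue; // nothing available on this call
                }
                // Copy only the bytes actually read; the buffer is reused.
                // add() throws IllegalStateException if a bounded queue is full.
                channel.add(Arrays.copyOf(buffer, read));
                chunks++;
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return chunks;
    }

    public static void main(String[] args) {
        // One second of 128 kb/s audio is 128,000 bits = 16,000 bytes.
        // Zero-filled bytes stand in for the real stream.
        byte[] oneSecond = new byte[16_000];
        BlockingQueue<byte[]> channel = new LinkedBlockingQueue<>();
        int chunks = pump(new ByteArrayInputStream(oneSecond), channel, 4096);
        System.out.println(chunks + " chunks queued");
    }
}
```

In a real source the input would be the stream opened on the URL endpoint, and a network read may return fewer bytes than the chunk size, so chunk boundaries carry no meaning on their own. That fits the capture-first approach, where interpretation of the bytes is deferred to downstream processing.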
